The Frontier Labs Talk AI Safety, But New Analysis by Bloomberg and Glass.AI Tells a Different Story.

Artificial intelligence (AI) presents a transformative moment for society, but the number of people focused on making sure it’s safe looks relatively small, according to a new opinion piece by Bloomberg.

When the AI labs (OpenAI, Anthropic, Google DeepMind and xAI) declined to provide stats on their personnel, Bloomberg asked Glass.AI to investigate. We did so by deep reading LinkedIn profiles, company websites, news articles and other online sources to identify staff focused on safety-related work. That includes tasks like making sure the AI tools are aligned with human values.

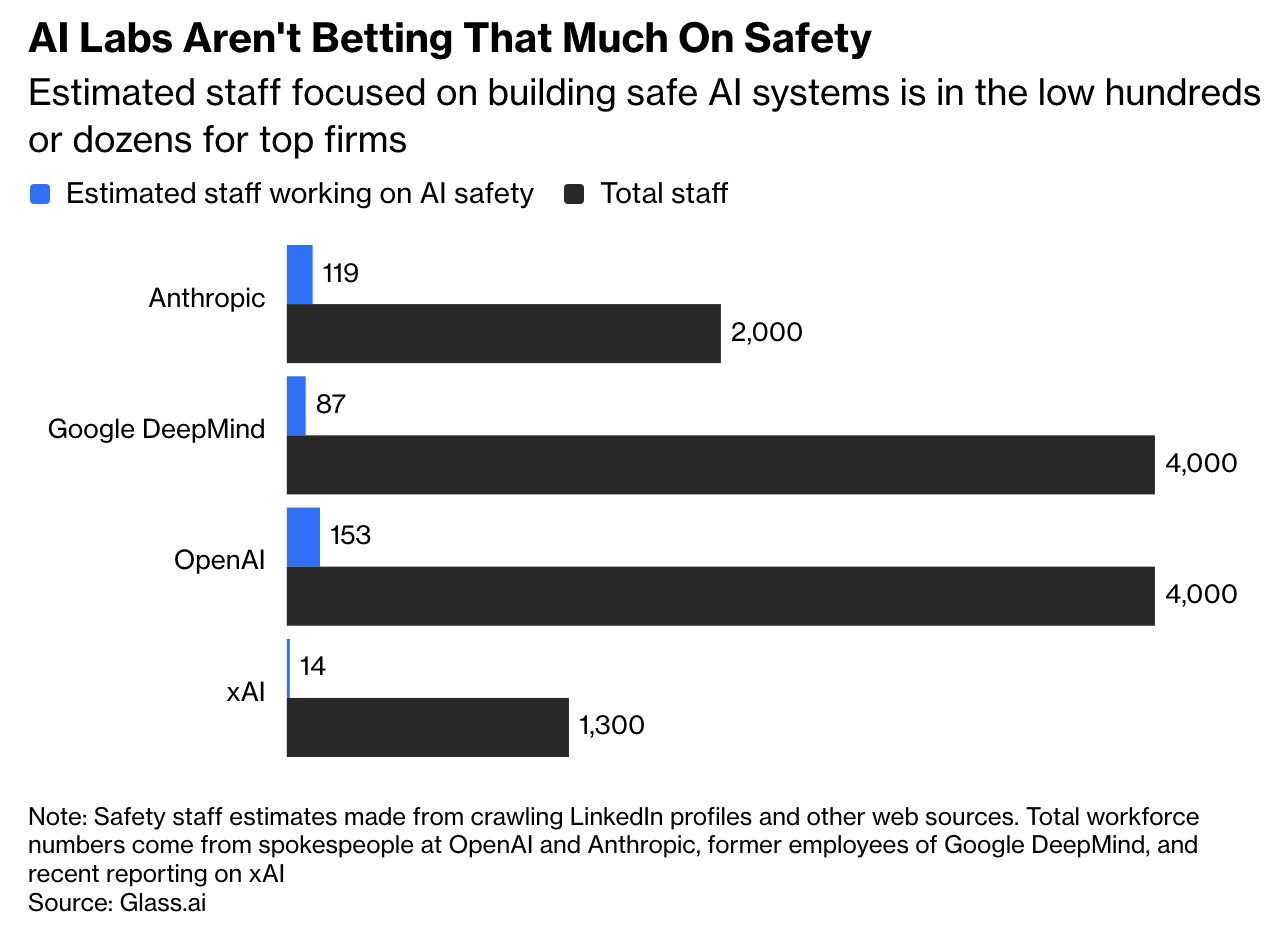

Our deep research for Bloomberg suggests that only 373 people are working full-time on making artificial intelligence systems safe and trustworthy at the four major AI labs, which together have over 11,000 employees.

The AI firms have made grand pronouncements about human welfare, but the real measure of their commitment to safety is where they put their money. Investment in safety-oriented roles looks like a small fraction of the money going into making their systems more powerful.

To our surprise, OpenAI appears to have more people working on AI safety than Anthropic. xAI shows very little focus on AI safety.

Here is the link to the Bloomberg Opinion piece written by best-selling author Parmy Olson.

If you would like to learn more about our discovery and tracking capabilities, get in touch at info@glass.ai.